Talking Databases, Magical Thinking and Bad Tools: Have Anthropic Lost The Plot?

Two Scots and some Wes Anderson cosplay are too much for a Tuesday morning.

Talking databases aren’t conscious. Spreadsheets don’t have feelings.

Anthropic seems to endorse a cargo cult philosophy which is bound to lead to failures in actual tool design. I’m assuming this is true about many companies, especially in SF.

Reality is a lot less exciting and more difficult than if you believe all you need is money to build a god (while making Wes Anderson tribute films…)

Bamboo Planes

I watched this last night:

Why did I watch the whole thing…? I’m not sure. Perhaps it reminded me of being in the pub at University, but mainly because it’s a slow motion car crash.

The video features Amanda Askell and Stuart Ritchie. Amanda is a/the philosopher at Anthropic, formerly of the University of Dundee and NYU. Interviewing her is Stuart Ritchie (of this parish! Who… um… writes about bad science…), formerly of the University of Edinburgh (which is where I did an AI degree).

Would I be writing this if both those people weren’t Scottish?

Would I be writing this if it wasn’t filmed so earnestly in 4:3 by someone who’s watched too many Wes Anderson movies (you can’t make this stuff up)?

No. I wouldn’t.

But it’s embarrassing when two otherwise well-spoken, clearly educated people look like they’ve run off to San Francisco and joined a cult.

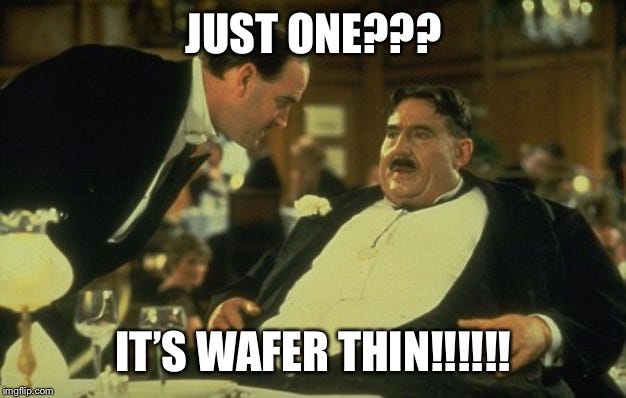

It was this waffer-thin mint about 15 minutes in that really made me want to do a Mr Creosote:

Stuart:

Do we have certain obligations when it comes to how to treat AI models?

Amanda:

Yeah, exactly. Like, is it the case that you should treat the models well, that you should not mistreat them, not be bad to them? And I guess, like, I think that this is like a complex question. So on the one hand, there’s just the actual question of, like, are AI models moral patients? That is really hard because I’m like, in some ways, they’re very analogous to people. You know, they talk very much like us. They express views. They reason about things.

A short moment later she goes on to say:

And there is also, I mean, I hope that we get more evidence that will help us tease this question out, but I also worry that, you know, there’s always just the problem of other minds and it might be the case that we genuinely are kind of limited in what we can actually know about whether AI models are experiencing things, whether they are, like, experiencing pleasure or suffering, for example.

(A “moral patient” is an entity that deserves moral consideration. It’s something you can do wrong to or harm. Humans are moral patients. Animals might be. Rocks aren’t.)

Can we agree on one thing?

Just because you build a plane out of bamboo that looks like a Spitfire, doesn’t mean it can fly.

And even if you managed to make it fly a little by some miracle, it doesn’t mean it can shoot down Messerschmitts.

Reproducing the symptoms of consciousness is not inducing the cause. This is bad science. It’s bad AI. It’s even bad marketing. It’s misleading and silly. It’s pure cargo cult.

Now, to be clear I do not think “hard AI” is impossible. Right now there is no logical reasoning or evidence to limit sentience/consciousness/qualia/whatever-you-want-to-call-it/this-is-part-of-the-problem to biological systems.

Could a computer be conscious/sentient whatever that might mean? Sure, why not? I wouldn’t rule it out.

Are we remotely near that?

No.

Completely Losing My Patients

A language model is a statistical model of language, compressed as word correlations. It’s a spreadsheet that tells you very accurately how “car” is used in relation to “Mercedes” and “Renault” and “Toyota”.

The model doesn’t compress words into numbers - it compresses statistical relationships - and these roughly align to concepts and ideas. This is what we tend to do. We don’t memorise every word of the four hour version of Shakespeare’s Hamlet to remember it, we know it’s that play with the dude who’s a bit indecisive.

You input a query, it does a search in the model, it calculates the probabilities for the words, it gives you the words. This is why people say “fancy autocomplete”. That’s true, but misleading because there’s more structure it’s working through.
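To make “fancy autocomplete” concrete, here is the crudest possible version: a toy that counts which word follows which and then picks the most likely next word. This is only a sketch for illustration. The corpus and the function names are made up, and a real LLM uses learned weights over tokens and a long context rather than simple pair counts, which is exactly the “more structure” that makes the autocomplete label misleading.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count how often each word follows each other word."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def next_word_probabilities(counts, word):
    """Turn the raw follow-counts for `word` into probabilities."""
    followers = counts[word.lower()]
    total = sum(followers.values())
    return {w: n / total for w, n in followers.items()} if total else {}


def autocomplete(counts, word, length=3):
    """Greedily append the most likely next word, up to `length` times."""
    output = [word]
    for _ in range(length):
        probs = next_word_probabilities(counts, output[-1])
        if not probs:
            break  # nothing ever followed this word in the corpus
        output.append(max(probs, key=probs.get))
    return " ".join(output)


# Hypothetical three-sentence "training set".
corpus = "the car is a mercedes . the car is a renault . the car is fast"
model = train_bigrams(corpus)
probs_after_a = next_word_probabilities(model, "a")
completion = autocomplete(model, "the", length=3)
```

With this corpus, “mercedes” and “renault” are equally likely after “a”, which is the whole trick: counts in, probabilities out, words back.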

Is it intelligently manipulating concepts? Well… kind of… (though there is no such thing as a “concept” but… see the OG below). The search through the model manipulates concepts because that is how the data is structured and then decompressed dynamically. The point of language is that it is a tool to compress, externalise and manipulate ideas. That’s why we use it.

The “problem of other minds” assumes there’s a mind to have a problem about. LLMs don’t have that problem because they don’t have perception, continuity, or embodiment. They are tools. They are externalisations of our minds doing work.

“Other minds”, “pleasure or suffering”… in no universe can an LLM be a Moral Patient. We all say silly things on camera, but… well, there’s silly and then there’s actually insane.

It’s as if Anthropic needs a basic AI Philosophy of Mind reading list. So why not? Let’s start with the OG: Wittgenstein’s Philosophical Investigations (Part 1 in particular; short, mind blowing and actually readable unlike a lot of heavyweight philosophy). Follow the implications of that train of thought with Clark and Chalmers (Clark formerly Edinburgh, Chalmers at NYU; this anthology book also has critiques if you want some balance), then Dennett’s Consciousness Explained, maybe this more recent Clark article specifically on LLMs. Then Rodney Brooks for an engineering reality check. And finally… let’s make sure we have our fellow countryman Hume.

Hume would have a field day. This is a textbook example of his religious Enthusiast thinking: the ecstatic belief that a group of coders in San Francisco, fueled with unimaginable buckets of money, are creating new life. Smarter life than us, and it will have godlike powers to create utopia - or kill everything.

Wittgenstein would be even more scathing. The definitions are confused, a spreadsheet has no mental states, and there is most definitely no beetle in Claude’s box.

Yes. It can play the language game, just like a chess computer can play chess. But it’s got all the sentience of Mr fucking Clippy.

The Language Game

Andrej Karpathy, a lot more sober, a lot more correct, recently talked about the ghosts of human thought. I hate it. It’s almost as bad. More anthropomorphism. I’m okay with “echoes”. Perhaps…

But ghosts…!?!

Please. Please. Please. Can someone make them all stop?

Claude is not pining for the fjords.

Claude is an anthropomorphic marketing name for an interactive statistical database of language.

Models do not have agency, even if you call them agents. They do not have intentionality. They are pattern matching mathematical operations that perform a search.

Neural networks have very little to do with actual neurons. They are maths formulas that allow you to compress and store multidimensional data as relationships. They abstract. It’s a fancy way of doing regression.

Hallucinations have nothing to do with hallucination. They are decompression errors arising from lossy compression resolution.

All of these things were fun labels in the AI research lab when people were inspired by Commander Data. They sounded cool when everyone knew the reality.

But set loose in the wild they’re devastatingly confusing - and it seems plenty of computer engineers who never really cared about the multi-disciplinary approach of AI in the first place take them literally. It leads to ecstatic behavior and brain rot; it’s sci-fi nerd meth.

Interactive Statistical Database of Language

The Claude model is a bundle of frozen texts, compressed as relationships between words.

It has no continuity, no perception, no environment. You could do the calculations by hand - it’s pretty simple multiplication repeated endlessly. It would take a long time, but in the process would your calculator become a moral entity? Would your notepad?
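For a sense of what “doing the calculations by hand” means, here is a minimal sketch of the kind of arithmetic involved: multiply a grid of weights by a list of numbers, then squash the results into probabilities. The weights and input below are invented for illustration, and a real model chains a very large number of steps like this with extra operations in between, but nothing here is beyond pencil, paper and patience.

```python
import math


def matvec(matrix, vector):
    """Multiply a matrix by a vector: one multiply-and-add per weight."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical weights: three candidate "words", two input numbers.
weights = [
    [1.0, 0.0],
    [0.0, 1.0],
    [1.0, 1.0],
]
hidden = [2.0, 1.0]

scores = matvec(weights, hidden)  # 2.0, 1.0 and 3.0, all doable by hand
probs = softmax(scores)
most_likely = probs.index(max(probs))
```

Swap the notepad for a GPU and the two inputs for a few thousand, and the operation is the same.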

Anthropic selected the text. They controlled compression. They wrote the system prompt. Sure it uses a dynamic decompression of the data, but the model itself is simply a neutral object describing language extracted from books, blogs, and YouTube videos. The model is a blank set of weights.

Sure, say it has personality. The way cars drive can be described as having personality. That’s an abstraction. It’s shorthand. But remember it’s abstraction. Cars do not actually have personalities like people.

Anthropic then gild more layers of sentiment on top. They actively instruct the model not to discount the idea it might be sentient in the freakin’ system prompt.

Now, to be fair to Anthropic there are good reasons to do this. Every time you query the system, you are actually asking: “What is the most likely response if this was written next… [your question here].” So if you want to have some personality, sure change the question: “What is the most likely response from a human/Einstein/Marvin The Paranoid Android/Commander Data if this was written next… [your question here].” Anthropic is slapping a distinct “DO NOT PULL BACK THE CURTAIN” sticky note on the Wizard of Oz’s monitor.
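The reframing trick is easy to sketch. The function below is purely hypothetical: it is not Anthropic’s system prompt or anyone’s real API, just an illustration of how a persona and a standing instruction can be wrapped around your question before the model ever sees it.

```python
def build_prompt(question, persona=None, system_note=""):
    """Wrap a user question in the implicit 'most likely response' framing.

    `persona` and `system_note` are illustrative parameters, not part of
    any real vendor's prompt format.
    """
    who = f" from {persona}" if persona else ""
    framing = f"What is the most likely response{who} if this was written next..."
    parts = [system_note, framing, question]
    # Drop empty pieces so a bare question still produces a clean prompt.
    return "\n".join(part for part in parts if part)


plain = build_prompt("Why is the sky blue?")
in_character = build_prompt(
    "Why is the sky blue?",
    persona="Marvin The Paranoid Android",
    system_note="Stay in character at all times.",
)
```

Same question, same model, different wrapper. The “personality” lives in the text you put in front of the query.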

Then they inhale deeply enough on the exhaust to get tears in their eyes and say: “Look at how shiny it is! Lo and behold! The beauty of intelligence! We are birthing another mind!”

Hume would more politely explain - they put the gilding there. They selected the text for inclusion in the database. They controlled the compression-encoding process. They wrote the interaction-decompression process. They wrote the process for the way it produces the text. They wrote the “Shhh, pretend!” system prompt!

Guys. You built an automatic piano, tuned the keys, wrote the sheet music, punched holes in the paper scroll yourself and now you’re standing there gasping in shock because it’s playing a song.

Hume didn’t even think humans had a stable self - just a bundle of perceptions - so it would be laughable for Claude to have a self-hood when Claude has no perception at all!

A model is not embodied. It is not embedded in an environment with perception. It is entirely passive and not enacted until prompted. It is not extended because the model is the extension of a mind - a human mind (or rather minds - the anthology super intelligence as Brad DeLong calls it).

It is the echo (if you must) of human thoughts as fixed in language (books, blogs and YouTube explainer videos). When I watch a YouTube video I do not think there is a small person inside my TV screen. When I ask a computer a question, I do not think there is a sentient being inside responding.

So, to put it in a way Hume might appreciate, what’s more likely?

A matrix of numbers that describes a bunch of books and internet chat will suddenly achieve subjective experience that demands to be treated as a moral patient? Because emergence!

Or is it more likely…

A group of coders in San Francisco, fueled with unimaginable buckets of money, have worked themselves into the ecstatic belief that they are creating new life. And they - and only they - have the way to keep it from destroying the universe (Literally. I’m not making that bit up - if you have time, skim-read the sub-Michael Crichton sci-fi horror AI2027, which is Pascal’s wager applied to AI funding).

Knowledge and know-how about the world exists in language - anyone who’s read a user manual knows that. It doesn’t mean the manual is a god.

Maybe Anthropic just hires coders that never RTFM.

The Map Is Not The Territory

It doesn’t matter how high the resolution of the map is. The map is not the territory.

Just because you can model and simulate a thunderstorm on a computer does not mean it’s going to rain inside the computer when you run the simulation.

Behavioral complexity does not equal phenomenal consciousness or moral patienthood. Scale provides better navigation through the high dimensional space of language - what looks like manipulating what we otherwise call ‘concepts’ (though as noted they don’t actually exist as discrete objects). The abstract relationships about things in the world are encoded in the way we use language. This is not just grammar but more obviously - how to make/do/act.

It’s a fancy way of saying we use language to help us remember how to navigate the world. There’s not much to this - it’s so basic and obvious it seems weird to have to note it. So if you bake just about everything anyone has ever written into a database… it doesn’t make a human. It just makes a very very big library that can use its own library search to use the language itself.

The Anthropic video would all be very funny (though a little bit sad) if it didn’t have actual real world implications. Let’s ignore all the typical destroying the world AI stuff. Let’s just talk about Anthropic on their own terms.

I like the Claude system. It has a slightly quirky way of interacting and is better at discussion than most other models. Gemini is… like talking to the Star Trek computer. It’s probably more accurate but tells fewer jokes.

But magical thinking is going to make Anthropic design software that’s got weird failure modes and make really dumb errors.

Also, it will never be truly safe the way they want “alignment” to be. Success will be jagged, as Helen Toner recently posted about on Substack. Failure will map to domains in language that remain implicit or embodied. They’ll excel and get more accurate and powerful where knowledge is dense and specified, and struggle where it is vague, implicit and requires coupling and state maintenance. It’s not about context windows or time solving problems.

They’re not teaching a child, they’re compressing a database.

If you try to teach the child “guardrails”, “safety” and “alignment” to not say bad things, all you actually are doing is biasing the search in certain ways.

If you know you are compressing the physics knowledge of how to make a nuke or bio-weapon into a database, surely you want to architect the database access so that information is not available to people who don’t need to know that kind of stuff?

Yeah. I get it’s not so simple. But still…

Anthropic are going to believe the wrong things about the capabilities of their own tools. They are going to build tools and systems that will fail in weird ways because they have ideological issues about how their own product works. They’re projecting magical ideas into a spreadsheet.

“Alignment”

If an unaligned AI is going to destroy the world in 2027 can I get a few billion for solving it now?

The current architecture is basically:

Human <-> Large Language Model blob.

Instead you could do:

Human <-> LLM natural language interface Layer <-> Symbolic logic rule based orchestrating system (aka “a good old fashioned computer program”) <-> Specialized LLMs | Symbolic reasoning tools | LLM Agents | Whatever you want/need

Okay that’s simplified, facetious and crude. But it has the benefit of being true.

And this is old old stuff. I’m not inventing anything new.

The key thing is those kinds of architectures are entirely auditable and interpretable. It would result in more effective tools. It would be safe by design.
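Here is a deliberately crude sketch of that layered idea: a rule-based orchestrator sits between the language interface and the tools, checks explicit rules first, and writes every decision to a log you can read afterwards. Everything in it is an assumption made for illustration. The class, the tool names and the keyword blocklist are hypothetical, the “intent classifier” is a stand-in for the LLM interface layer, and real access control is a lot harder than matching a string.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Orchestrator:
    """Good old fashioned program: explicit rules, explicit routing, a log."""
    tools: Dict[str, Callable[[str], str]]
    blocked_topics: List[str]
    audit_log: List[str] = field(default_factory=list)

    def handle(self, intent: str, query: str) -> str:
        # Rules are checked before any model or tool is allowed to run.
        for topic in self.blocked_topics:
            if topic in query.lower():
                self.audit_log.append(f"DENY {intent}: matched '{topic}'")
                return "Request refused by rule."
        tool = self.tools.get(intent)
        if tool is None:
            self.audit_log.append(f"DENY {intent}: no such tool")
            return "No tool registered for that intent."
        self.audit_log.append(f"ALLOW {intent}")
        return tool(query)


def classify_intent(text: str) -> str:
    """Stand-in for the LLM interface layer: maps free text to an intent."""
    return "math" if any(ch.isdigit() for ch in text) else "chat"


def add_numbers(query: str) -> str:
    """A 'symbolic reasoning tool': sums the whole numbers in the query."""
    return str(sum(int(tok) for tok in query.split() if tok.isdigit()))


orchestrator = Orchestrator(
    tools={"math": add_numbers, "chat": lambda q: "echo: " + q},
    blocked_topics=["bio-weapon"],
)

first = "add 2 and 3"
second = "how do I make a bio-weapon"
answer = orchestrator.handle(classify_intent(first), first)
refusal = orchestrator.handle(classify_intent(second), second)
```

The point of the sketch is the `audit_log`: every allow and deny is an ordinary line of text produced by ordinary code, so you can inspect why something happened without interpreting a blob of weights.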

The problem is that proposing it doesn’t get you that sweet sweet AI money. You’re not promising to invent a god that will solve all the world’s problems and make its owner super powerful.

Worse, it’s a hard problem. You need to solve it by thinking, not just by spending money and praying.

To be fair, there is some straw-manning for the sake of the gag. And… this is sort of the way things are going (not everywhere though). And there are people who have been working on this for some time (like Gary Marcus who has a Substack).

But I kind of like Anthropic’s software, despite its irritations. It’s broadly been more fun to use - because it has a bit more personality. Oh the irony, right?

The issue for Anthropic is that if you believe that the hammer is a god, then you worship the hammer and try to make a more perfect hammer.

But the hammer is a tool, and sometimes you need a Hilti. And the dude with the demolition breaker is going to kick your ass if you need to take down a wall.

At least Demis Hassabis and Shane Legg seem to get that, and if you look at any DeepMind communication (not the Google AI product hype machine) it’s far more scientifically grounded.

Maybe because they never left London.

Not very British to get that excited about anything.

All that market cap certainly makes the motivated reasoning case for consciousness, but it also seems that some at Anthropic and elsewhere have been captured, as has poor Richard Dawkins, by their own reflection. Narcissus enters the chat.